2026 Challenge Track Description

Track 1: Multi-Camera 3D Perception (Sim2Real)

Overview

Challenge Track 1 tackles multi-camera 3D perception in large-scale indoor environments. Participants must detect and track people and mobile objects, including autonomous mobile robots (AMRs), humanoids, forklifts, and pallet trucks, while maintaining consistent identities within and across all cameras in a scene.

Building on the 2025 edition, which introduced over 500 synthetic camera views generated via NVIDIA Omniverse along with 2D/3D bounding boxes, depth maps, and detailed calibration metadata, the 2026 edition advances the benchmark in three key directions. First, the synthetic training corpus is further expanded in scene diversity and annotation fidelity, now generated using the Isaacsim.Replicator.Agent (IRA) and Isaacsim.Replicator.Object (IRO) extensions on the NVIDIA Omniverse platform, as well as Cosmos Transfer 2.5 (CT2.5), covering diverse warehouse layouts; participants are allowed to generate more data using these tools. Second, real-world test sets are introduced to explicitly evaluate Sim2Real generalization, pushing participants beyond purely synthetic benchmarks toward deployable perception systems. Third, depth maps are provided only for training and validation; participants must develop models that rely solely on RGB inputs at inference time, reflecting the limited availability of depth data in real-world CCTV scenarios.

Evaluation continues to use the 3D Higher Order Tracking Accuracy (HOTA) metric, which jointly balances detection, association, and localization quality. Submissions demonstrating online tracking, relying only on past-frame information, receive a +10% multiplicative bonus when determining the final winner and runner-up.

Task

Teams should detect every object and keep its identity (object ID) consistent as it moves within and across all cameras in a scene.

Submission Format

For compatibility with the official evaluation server, results must be a single plain-text file (track1.txt) where each line describes one detection:

〈scene_id〉 〈class_id〉 〈object_id〉 〈frame_id〉 〈x〉 〈y〉 〈z〉 〈width〉 〈length〉 〈height〉 〈yaw〉

| Field | Type | Description |

| scene_id | int | Unique identifier for each multi-camera sequence. |

| class_id | int | Starting from zero, denoting an object’s category. (Person→0, Forklift→1, NovaCarter→2, Transporter→3, FourierGR1T2→4, AgilityDigit→5.) |

| object_id | int | Positive, unique ID per scene & class. Remains constant across all cameras within the same scene and class. |

| frame_id | int | Zero-based frame index within that scene. |

| x, y, z, | float | 3D coordinates of the bounding-box centroid in the world coordinate system which is in meters. |

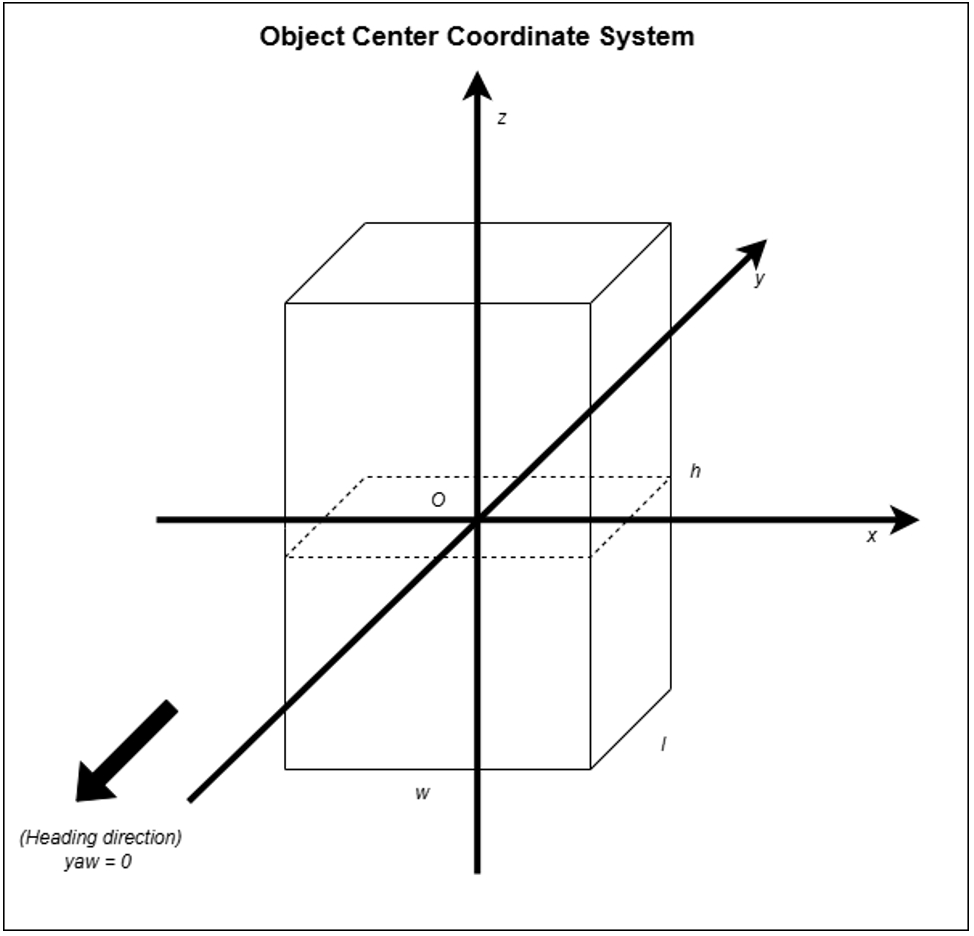

| width, length, height | float | Box dimensions in meters along its x (width), y (length) and z (height) axes of the object-centered coordinate system, with the origin at the centroid. |

| yaw | float | Euler angle in radians about the y-axis of the object-centered coordinate system defining the box’s heading in the world coordinate system. (Pitch and roll are assumed zero.) |

Example: in scene 0, if a Person is assigned object_id = 5, then a Forklift cannot use object_id = 5 (it must use a different ID, e.g. 6).
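The ID rule in the example above can be checked before submitting. The following is a minimal sketch, not part of the official tooling: the helper name `find_id_conflicts` and the tuple layout are assumptions made for illustration. It reports every object ID that is shared by more than one class within the same scene.

```python
from collections import defaultdict


def find_id_conflicts(rows):
    """Return IDs used by more than one class within a scene.

    rows: iterable of (scene_id, class_id, object_id) tuples (hypothetical layout).
    Result maps (scene_id, object_id) to the sorted list of clashing class IDs.
    """
    classes_by_id = defaultdict(set)
    for scene_id, class_id, object_id in rows:
        classes_by_id[(scene_id, object_id)].add(class_id)
    return {key: sorted(classes)
            for key, classes in classes_by_id.items()
            if len(classes) > 1}


# Scene 0 reuses object ID 5 for a Person (class 0) and a Forklift (class 1).
# The same ID in scene 1 is not a conflict, because IDs are scoped per scene.
rows = [(0, 0, 5), (0, 1, 5), (0, 1, 6), (1, 1, 5)]
conflicts = find_id_conflicts(rows)
```

An empty result means no ID is shared between classes in any scene.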

Archive the text file as track1.zip or track1.tar.gz before uploading.

Important notes on the submission file:

- All floating-point numbers in the submission file must be rounded to two decimal places.

- The file size limit for each submission is 50 MB.
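As an illustration of the format above, here is a minimal sketch of how a submission file could be written and archived. The function names are hypothetical, and the single-space field separator is an assumption based on the line template; only `track1.txt`, `track1.zip`, the field order, and the two-decimal rounding come from the description.

```python
import zipfile


def format_detection(scene_id, class_id, object_id, frame_id,
                     x, y, z, width, length, height, yaw):
    """Format one detection as a submission line.

    Integer fields are written as-is; floating-point fields are rounded
    to two decimal places. Fields are joined by single spaces (assumed).
    """
    ints = [scene_id, class_id, object_id, frame_id]
    floats = [x, y, z, width, length, height, yaw]
    return " ".join([str(int(v)) for v in ints] + [f"{v:.2f}" for v in floats])


def write_submission(detections, txt_path="track1.txt", zip_path="track1.zip"):
    """Write all detections to track1.txt and archive it as track1.zip."""
    with open(txt_path, "w") as f:
        for det in detections:
            f.write(format_detection(*det) + "\n")
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.write(txt_path, arcname="track1.txt")


# One Person (class 0) with object ID 5 in scene 0, frame 12.
line = format_detection(0, 0, 5, 12, 1.23456, -4.5, 0.9, 0.6, 0.55, 1.8, 3.14159)
```

Rounding at write time also helps keep the file under the 50 MB limit, since each float takes only a few characters.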

Evaluation

Scores are computed with 3D HOTA [1], which jointly balances detection, association, and localization quality. HOTA is computed per class within each scene, and the per-class scores are averaged to give a scene score. The final score is a weighted average of the scene scores across all scenes, weighted by the total number of objects. Ground-truth (GT) and predicted objects are matched using 3D IoU.
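The aggregation described above can be read as follows. This is only a sketch of one plausible interpretation, not the official evaluation code: the function name, the input layout, and the choice of the per-scene object count as the weight are all assumptions.

```python
def aggregate_hota(per_scene):
    """Combine per-class HOTA scores into one final score.

    per_scene: {scene_id: {"class_hota": {class_id: hota}, "num_objects": int}}
    (hypothetical layout). Each scene score is the plain mean of its per-class
    HOTA values; scenes are then averaged with weights equal to their object counts.
    """
    weighted_sum = 0.0
    total_objects = 0
    for scene in per_scene.values():
        class_scores = list(scene["class_hota"].values())
        scene_score = sum(class_scores) / len(class_scores)
        weighted_sum += scene_score * scene["num_objects"]
        total_objects += scene["num_objects"]
    return weighted_sum / total_objects


# Scene 0: mean of 0.60 and 0.40 is 0.50, weight 30.
# Scene 1: single class at 0.80, weight 10.
scores = {
    0: {"class_hota": {0: 0.60, 1: 0.40}, "num_objects": 30},
    1: {"class_hota": {0: 0.80}, "num_objects": 10},
}
final = aggregate_hota(scores)  # (0.50 * 30 + 0.80 * 10) / 40
```

Under this reading, scenes with more objects contribute more to the final score, while classes within a scene count equally regardless of how many instances they have.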

- Leaderboard = raw HOTA on the hidden test set.

- Online-tracker bonus: If your paper and code demonstrate that only past frames are used, a +10% multiplicative bonus is applied when deciding the final winner and runner-up (the public leaderboard itself shows the un-bonused score).

Example: Team A (offline) scores 66% HOTA and Team B (online) scores 61% HOTA. With the bonus, Team B's score becomes 61% × 1.10 = 67.1% HOTA, so Team B ranks higher in the final award list.
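The example above can be reproduced in a few lines. This is an illustrative sketch only; `award_score` is a hypothetical helper, and the 1.10 factor is the +10% multiplicative bonus from the rules.

```python
def award_score(hota, online):
    """Score used for the final award ranking (not the public leaderboard)."""
    return hota * 1.10 if online else hota


# (raw HOTA in percent, whether the tracker is online)
teams = {"Team A": (66.0, False), "Team B": (61.0, True)}
ranking = sorted(teams, key=lambda name: award_score(*teams[name]), reverse=True)
```

Here `ranking` places Team B first, because its bonused score of about 67.1 exceeds Team A's 66.0, even though Team A leads on the public leaderboard.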

Data Access

| Split | Hours | Cameras | Scenes | Resolution / FPS | Objects in Training GT* | File Size |
|---|---|---|---|---|---|---|
| Train + Val | 28.5 | 342 | 26 warehouse layouts from both IsaacSim and CT2.5 | 1080p @ 30 fps | 1,379 instances (901 person + service robots / forklifts / pallet trucks) | ~50 GB (depth maps optional, ~3 TB) |

* Counts refer to the training + validation ground truth only; the test split remains hidden until the evaluation system is released.

* Teams are allowed to train with 2024 and 2025 data, as well as external public data.

Each scene provides temporally synchronized RGB video, camera calibration, a top-down map, and per-frame 2D/3D annotations. Depth maps (PNG-in-HDF5) are included but very large; feel free to ignore them if storage or I/O is a concern, and download only the other files using the Hugging Face CLI.

By downloading the data, you agree to the Physical AI Smart Spaces licence (CC-BY 4.0).

References

[1] J. Luiten et al., “HOTA: A Higher Order Metric for Evaluating Multi-Object Tracking,” IJCV, 2021.